As organizations rush to adopt LLMs and AI‑powered agents, new security risks are emerging: prompt injection, data leakage, model manipulation, unsafe outputs, API abuse, and more. In this Barracuda webinar, Senior Product Manager Avin and Field Marketing Manager Shihao walk through how attackers exploit AI interfaces and demonstrate how Barracuda’s LLM Protection secures prompts, policies, and agent behavior in real time.

What you’ll learn in this session:

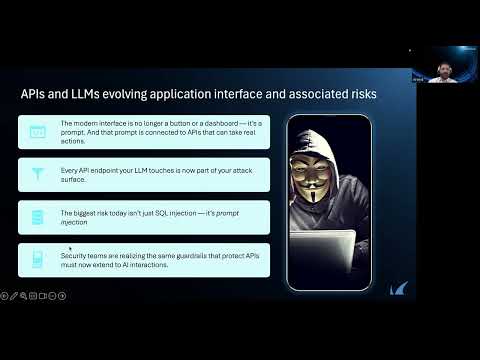

– The rapid shift from traditional UI to agent‑driven AI interfaces

– Why every API your LLM touches becomes part of the attack surface

– Live demos of real risks: unsafe prompts, data leaks, manipulated responses, and denial of service (DoS) via oversized inputs

– How attackers exploit LLMs with prompt injection, system prompt leakage, supply‑chain poisoning, and excessive agency

– A walkthrough of the OWASP Top 10 for LLM Applications and what each risk means for your environment

– Policy enforcement, guardrails, and real‑time remediation using Barracuda’s Web Application Firewall & LLM Protection

– How Barracuda stops sensitive data exposure, blocks malicious prompts, and prevents output‑based exploits

– Why AI security must evolve from protecting applications to protecting interactions